How to Install and Configure Apache Hadoop on Debian 10

Apache Hadoop is an open source, Java-based software platform that manages data processing and storage for big data applications. Hadoop works by distributing large data sets and analytics jobs across nodes in a computing cluster, breaking them down into smaller workloads that can be run in parallel. Hadoop can process structured and unstructured data and scale up reliably from a single server to thousands of machines.

Update the system

Update the system packages with the latest version by executing the following command and reboot the system once updated.

apt-get update -y

reboot Installing Java

Apache Hadoop is an application based on JAVA programming, so we need to install JAVA with the following command.

apt-get install default-jdk default-jre -yVerify the JAVA version once installation is complete.

java -versionOutput:

root@vps:~# java -version

openjdk version "11.0.11" 2021-04-20

OpenJDK Runtime Environment (build 11.0.11+9-post-Debian-1deb10u1)

OpenJDK 64-Bit Server VM (build 11.0.11+9-post-Debian-1deb10u1, mixed mode, sharing) Creating hadoop user

We will now create hadoop User and Setup Passwordless SSH for Hadoop user.

For this, simply follow the below instructions,

adduser hadoopOutput:

root@vps:~# adduser hadoop

Adding user `hadoop' ...

Adding new group `hadoop' (1000) ...

Adding new user `hadoop' (1000) with group `hadoop' ...

Creating home directory `/home/hadoop' ...

Copying files from `/etc/skel' ...

New password:Swith to Hadoop user once the user has been created.

su - hadoopOutput:

root@vps:~# su - hadoop

To run a command as administrator (user "root"), use "sudo <command>".

See "man sudo_root" for details.

hadoop@vps:~$Run the following command to generate SSH key.

ssh-keygen -t rsaOutput:

root@vps:~# su - hadoop

hadoop@vps:~$ ssh-keygen -t rsa

Generating public/private rsa key pair.

Enter file in which to save the key (/home/hadoop/.ssh/id_rsa):

Created directory '/home/hadoop/.ssh'.

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /home/hadoop/.ssh/id_rsa.

Your public key has been saved in /home/hadoop/.ssh/id_rsa.pub.

The key fingerprint is:

SHA256:3tHghkR43tqj7Dt8E1yGC73HnseAJYHz8EeJQbKGOQI hadoop@vps.server.com

The key's randomart image is:

+---[RSA 2048]----+

| E .oooo . |

| . .+=oo o |

| . +o+B.+ |

| . +ooBo= |

| S=+@. |

| ..oO.+ |

| o...= + |

| = o o o |

| .o+ . . |

+----[SHA256]-----+You have to add the public key of your computer to the authorized_keys file of the computer also give the permission to the authorized_keys file.

cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

chmod 0600 ~/.ssh/authorized_keysVerify the passwordless SSH connection with following command.

ssh Server's_IP_Address Install Hadoop

Switch to hadoop user and download the latest version of Hadoop using follwing "wget" command.

su - hadoop

wget https://downloads.apache.org/hadoop/common/hadoop-3.3.0/hadoop-3.3.0.tar.gz Extract the downloaded "tar" file with following command.

tar -xvzf hadoop-3.3.0.tar.gzNext, switch back to root user for the below commands. We will move the extracted files to a specific directory.

su root

cd /home/hadoop

mv hadoop-3.3.0 /usr/local/hadoop/home/hadoop path may differ in case you have a different username.

Create the log directory to stotre the "Apache Hadoop" logs.

mkdir /usr/local/hadoop/logsChange the ownership of /usr/local/hadoop directory to hadoop and switch back to hadoop user.

chown -R hadoop:hadoop /usr/local/hadoop

su hadoopEnter the edit mode to ".bashrc" and define the Hadoop environment variables by adding the following content to the end of the file.

nano ~/.bashrcAnd add the follwing configuration to the end of the file.

export HADOOP_HOME=/usr/local/hadoop

export HADOOP_INSTALL=$HADOOP_HOME

export HADOOP_MAPRED_HOME=$HADOOP_HOME

export HADOOP_COMMON_HOME=$HADOOP_HOME

export HADOOP_HDFS_HOME=$HADOOP_HOME

export YARN_HOME=$HADOOP_HOME

export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_HOME/lib/native

export PATH=$PATH:$HADOOP_HOME/sbin:$HADOOP_HOME/bin

export HADOOP_OPTS="-Djava.library.path=$HADOOP_HOME/lib/native"Run the follwing command to activate the added environment variables.

source ~/.bashrc Configure Hadoop

If you are new to Hadoop and want to explore basic commands or test applications, you can configure Hadoop on a single node. Configure Java Environment Variables.

Next, you will need to define Java environment variables in hadoop-env.sh to configure YARN, HDFS, MapReduce, and Hadoop-related project settings.

To locate the correct path of Java by using the following command.

which javacOutput:

hadoop@vps:~$ which javac

/usr/bin/javacNext, find the OpenJDK directory with the following command.

readlink -f /usr/bin/javacOutput:

hadoop@vps:~$ readlink -f /usr/bin/javac

/usr/lib/jvm/java-11-openjdk-amd64/bin/javacNext, edit the hadoop-env.sh file and define the Java path.

nano $HADOOP_HOME/etc/hadoop/hadoop-env.shAnd add the follwing configuration to the end of the file.

export JAVA_HOME=/usr/lib/jvm/java-11-openjdk-amd64

export HADOOP_CLASSPATH+=" $HADOOP_HOME/lib/*.jar"Need to download the Javax activation file by running the following command.

cd /usr/local/hadoop/lib

sudo wget https://jcenter.bintray.com/javax/activation/javax.activation-api/1.2.0/javax.activation-api-1.2.0.jarOutput:

root@vps:~# cd /usr/local/hadoop/lib

root@vps:/usr/local/hadoop/lib# sudo wget https://jcenter.bintray.com/javax/activation/javax.activation-api/1.2.0/javax.activation-api-1.2.0.jar

--2021-06-14 12:04:58-- https://jcenter.bintray.com/javax/activation/javax.activation-api/1.2.0/javax.activation-api-1.2.0.jar

Resolving jcenter.bintray.com (jcenter.bintray.com)... 34.95.74.180

Connecting to jcenter.bintray.com (jcenter.bintray.com)|34.95.74.180|:443... connected.

HTTP request sent, awaiting response... 200 OK

Length: 56674 (55K) [application/java-archive]

Saving to: ‘javax.activation-api-1.2.0.jar’

javax.activation-ap 100%[===================>] 55.35K --.-KB/s in 0.002s

2021-06-14 12:04:58 (30.5 MB/s) - ‘javax.activation-api-1.2.0.jar’ saved [56674/56674]Next, Verify the hadoop version.

hadoop versionOutput:

root@vps:~# cd /usr/local/hadoop/lib

root@vps:/usr/local/hadoop/lib# sudo wget https://jcenter.bintray.com/javax/activation/javax.activation-api/1.2.0/javax.activation-api-1.2.0.jar

--2021-06-14 12:04:58-- https://jcenter.bintray.com/javax/activation/javax.activation-api/1.2.0/javax.activation-api-1.2.0.jar

Resolving jcenter.bintray.com (jcenter.bintray.com)... 34.95.74.180

Connecting to jcenter.bintray.com (jcenter.bintray.com)|34.95.74.180|:443... connected.

HTTP request sent, awaiting response... 200 OK

Length: 56674 (55K) [application/java-archive]

Saving to: ‘javax.activation-api-1.2.0.jar’

javax.activation-ap 100%[===================>] 55.35K --.-KB/s in 0.002s

2021-06-14 12:04:58 (30.5 MB/s) - ‘javax.activation-api-1.2.0.jar’ saved [56674/56674] Configure core-site.xml File

To set up Hadoop you need to specify the URL for your NameNode as following.

nano $HADOOP_HOME/etc/hadoop/core-site.xmlAnd add the follwing configuration to the end of the file.

<configuration>

<property>

<name>fs.default.name</name>

<value>hdfs://0.0.0.0:9000</value>

<description>The default file system URI</description>

</property>

</configuration> Configure hdfs-site.xml File

Need to define location for storing node metadata, fsimage file, and edit log file. Configure the file by defining the NameNode and DataNode storage directories.

Before configure create a directory for storing node metadata.

mkdir -p /home/hadoop/hdfs/{namenode,datanode}

chown -R hadoop:hadoop /home/hadoop/hdfsEdit the hdfs-site.xml file and define the location of the directory as follows.

nano $HADOOP_HOME/etc/hadoop/hdfs-site.xmlAnd add the follwing configuration to the end of the file.

<configuration>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<property>

<name>dfs.name.dir</name>

<value>file:///home/hadoop/hdfs/namenode</value>

</property>

<property>

<name>dfs.data.dir</name>

<value>file:///home/hadoop/hdfs/datanode</value>

</property>

</configuration> Configure mapred-site.xml File

Use the following command to access the mapred-site.xml file and define MapReduce values.

nano $HADOOP_HOME/etc/hadoop/mapred-site.xmlAnd add the follwing configuration to the end of the file.

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

</configuration> Configure yarn-site.xml File

You would need to edit the yarn-site.xml file and define YARN related settings.

nano $HADOOP_HOME/etc/hadoop/yarn-site.xmlAnd add the follwing configuration to the end of the file.

<configuration>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

</configuration> Format HDFS NameNode

It is important to format the NameNode before starting Hadoop services for the first time.

hdfs namenode -format Start the Hadoop Cluster

First, start the NameNode and DataNode with the following command.

start-dfs.shOutput:

hadoop@vps:~$ start-dfs.sh

Starting namenodes on [0.0.0.0]

0.0.0.0: Warning: Permanently added '0.0.0.0' (ECDSA) to the list of known hosts.

Starting datanodes

localhost: Warning: Permanently added 'localhost' (ECDSA) to the list of known hosts.

Starting secondary namenodes [vps.server.com]

vps.server.com: Warning: Permanently added 'vps.server.com' (ECDSA) to the list of known hosts.You will have to make sure you have hostname

vps.server.compointing to the server's IP address in/etc/hosts.

Next, start the YARN resource and nodemanagers by typing.

start-yarn.shOutput:

hadoop@vps:~/hdfs$ start-yarn.sh

Starting resourcemanager

Starting nodemanagers

hadoop@vps:~/hdfs$Verify if all the daemons are active and running as Java processes.

jpsOutput:

hadoop@vps:~$ jps

30386 NameNode

31363 Jps

30916 ResourceManager

30472 DataNode

30652 SecondaryNameNode

31004 NodeManager Access Hadoop Web Interface

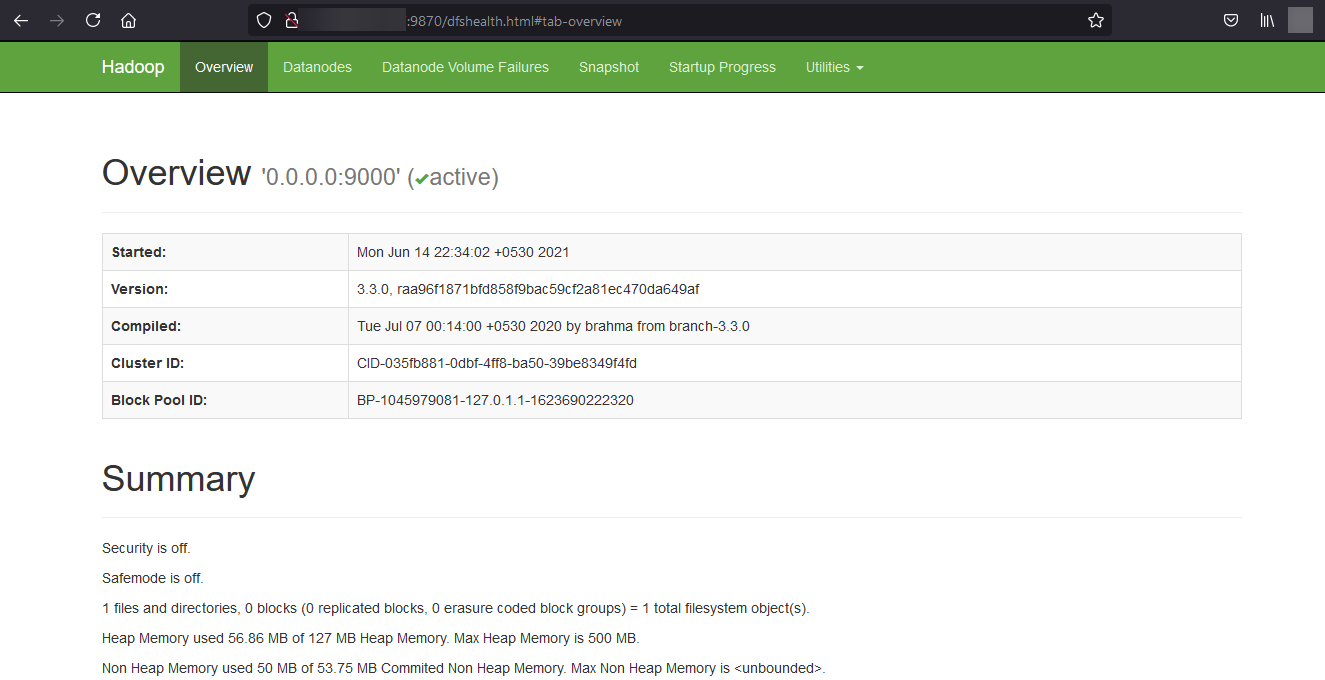

Navigate your localhost URL or IP to access Hadoop NameNode : http://your-server-ip:9870

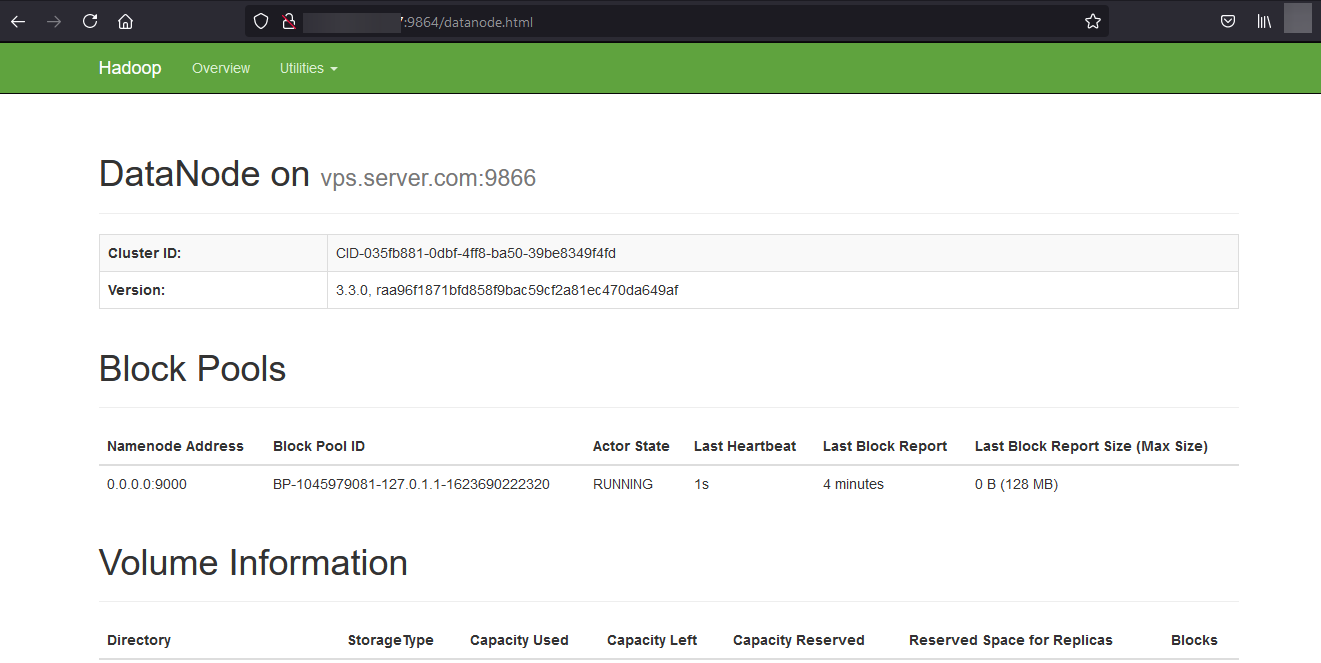

Navigate your localhost URL or IP to access individual DataNodes : http://your-server-ip:9864

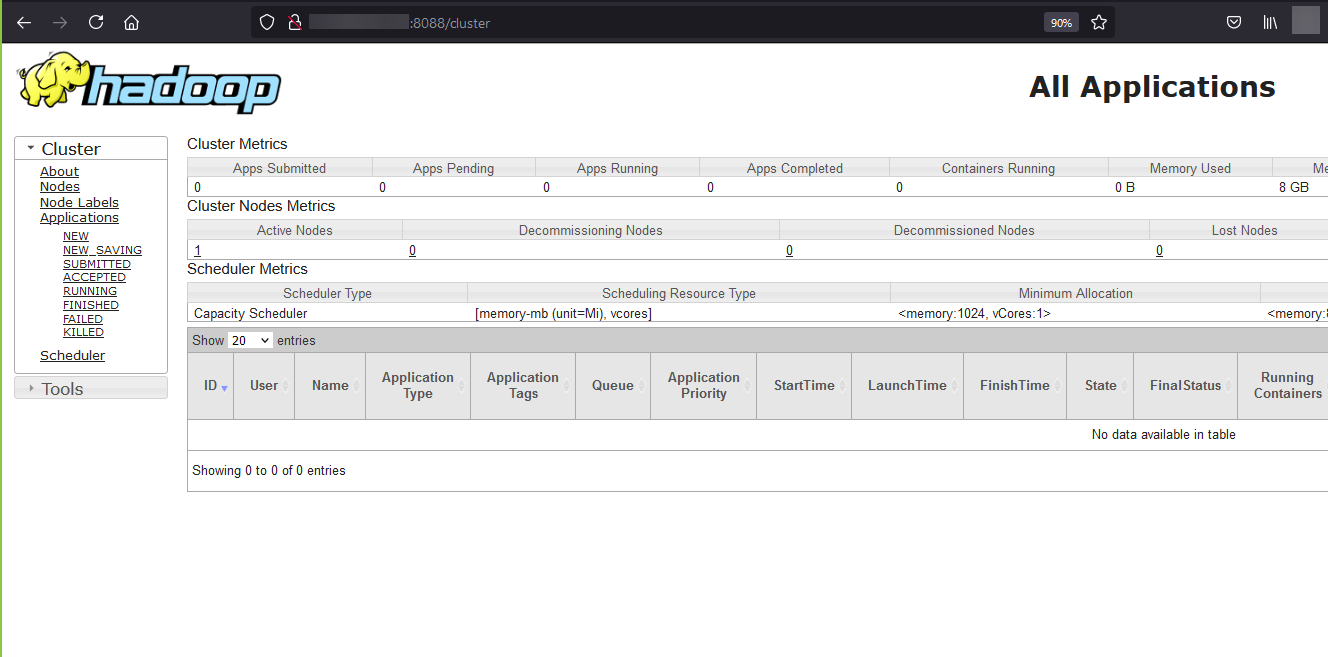

Navigate your localhost URL or IP to access the YARN Resource Manager: http://your-server-ip:8088

Done!

CrownCloud - Get a SSD powered KVM VPS at $4.5/month!

Use the code WELCOME for 10% off!

1 GB RAM / 25 GB SSD / 1 CPU Core / 1 TB Bandwidth per month

Available Locations: LAX | MIA | ATL | FRA | AMS